Businesses have a ton of this kind of stuff that's purely internal to the business. Even if you don't care about your employees dropping dead of heart disease, many things that junior employees do are not the hypothetically ideal use of time, but you need people to keep doing them in order to circulate knowledge; if you optimize them away you need to make more time in your L&D budget

Is there a name for necessary stuff that happens because of inefficiencies? Like, for example, exercise. If you're a dockworker in the 1700s you might have a bunch of health problems but "sedentary lifestyle" is not one of them. But if you become a dock crane operator in modern times, you need to both do your job *and* go to the gym where you "waste" a bunch of effort in order to exercise, because your job is sitting down now

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

BleepingComputer@infosec.exchange wrote:

Threat actors are exploiting the recent Claude Code source code leak by using fake GitHub repositories to deliver Vidar information-stealing malware.

aredridel@kolektiva.social ("Mx. Aria Stewart") wrote:

RE: https://social.coop/@chrisjrn/116337927962121277

So very much this. The processes to not do this are not there yet.

The software industries have been due for a reckoning for a while. It's coming because of this.

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

chrisjrn@social.coop ("Christopher Neugebauer") wrote:

5. Dependence on LLMs is very likely to lead to a crisis of maintenance, because we're using tools that bias towards the things that we already know lead towards less maintainable code.

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

chrisjrn@social.coop ("Christopher Neugebauer") wrote:

4. Things are never equal.

4a. LLMs are predisposed to being better at accretion than modification due to inherent limits on static analysis (see Rice's theorem).

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

chrisjrn@social.coop ("Christopher Neugebauer") wrote:

3. All things being equal, smaller codebases are less complex and easier to interpret than accreted code: accreted code "lithifies" (cf https://www.youtube.com/watch?v=i3nJR7PNgI4) over time.

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

chrisjrn@social.coop ("Christopher Neugebauer") wrote:

2. All things being equal, accreted code is more likely to be correct than modified code (because the code that existed beforehand might be depended upon in multiple unrelated places)

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

chrisjrn@social.coop ("Christopher Neugebauer") wrote:

Some things that I hold true about software engineering:

1. Systems can gain modified functionality by accretion (i.e. additional code is created), or through alteration (i.e. new code replaces old code).

aredridel@kolektiva.social ("Mx. Aria Stewart") wrote:

My moment of clarity in the last few weeks was coming back to “Oh right, copyright is a hack, and one that is not serving us, particularly us on the margins”

The moral rights of authorship and the way we situate our legal process of ownership are, actually, kinda at odds. And it entirely misses the idea of a commons, both as community and as a cultural base to draw from.

I've long believed that we, collectively, should own our culture — to have modern myths be Copyright 1972 LucasFilm, the traditional songs we sing Copyright 1922, now owned by Warner/Chappell Music is one of the things I find repugnant about the situation we find ourselves in.

That said, reconciling that with the behavior of the AI companies, _particularly_ the American ones? It's hard. Google abuses its monopoly position; Microsoft has forced harmful and terrible tooling on people at every turn; OpenAI is run by someone who actively despises art and does not understand it; and Anthropic is run by a guy who is trying to make sure the apocalypse has a pleasant demeanor and doesn't offend any corporations on the way. All of the above have scraped the web with no active consent — and that's largely fine, that's what putting things in common _is_, that's the beauty of the open information world we have the remnants of — but also actively evading measures people put in place to stop it and with absolutely no willingness to engage with the process. Extracting from the commons _is_ the tragedy of the commons.

It does not mean that enlarging the commons with the resulting tools is bad. The doctrine of original sin is a Christian concept I do not subscribe to. The concept of 'fruit of the poisonous tree' is a legal tool to fix power relations, not a moral stance. They're worth understanding, but they are not absolute moral stances that are self-evident.

These are not harmless tools, but so too putting hard regulation and corporate, legalistic scrutiny on everything has a vastly negative impact: it is a yoke on human creativity and community to the reins of capital.

And, so too, disruption has huge costs. We are, apparently, committed to doing things the worst possible way. One can just hope that we capture the good too, because the ride has started and it's rather late to get off.

zkat@toot.cat ("Katerina Marchán") wrote:

RE: https://hachyderm.io/@petrillic/116337502667369228

I can’t have a conversation with any genAI boosters that is remotely productive precisely because of this.

Boosted by jwz:

developerjustin ("Justin Ferrell") wrote:

jscalzi@threads.net ("John Scalzi") wrote:

I suppose we don't want to make too much about the fact that the two cabinet positions Trump has fired to date are women

dysfun@treehouse.systems ("gaytabase") wrote:

i've been training my whole life for this. they taught us the modern major general's song in primary school.

NfNitLoop ("Cody Casterline 🏳️‍🌈") wrote:

Person: *describes an autistic trait of mine*

Me: Thanks!

(later)

💭 Ohhh maybe they didn't mean that as a compliment.

dysfun@treehouse.systems ("gaytabase") wrote:

no you're singing gilbert and sullivan down voice notes to a drunk

dysfun@treehouse.systems ("gaytabase") wrote:

the true cost of being pep talked by a brit

Boosted by jwz:

0xabad1dea@infosec.exchange ("abadidea") wrote:

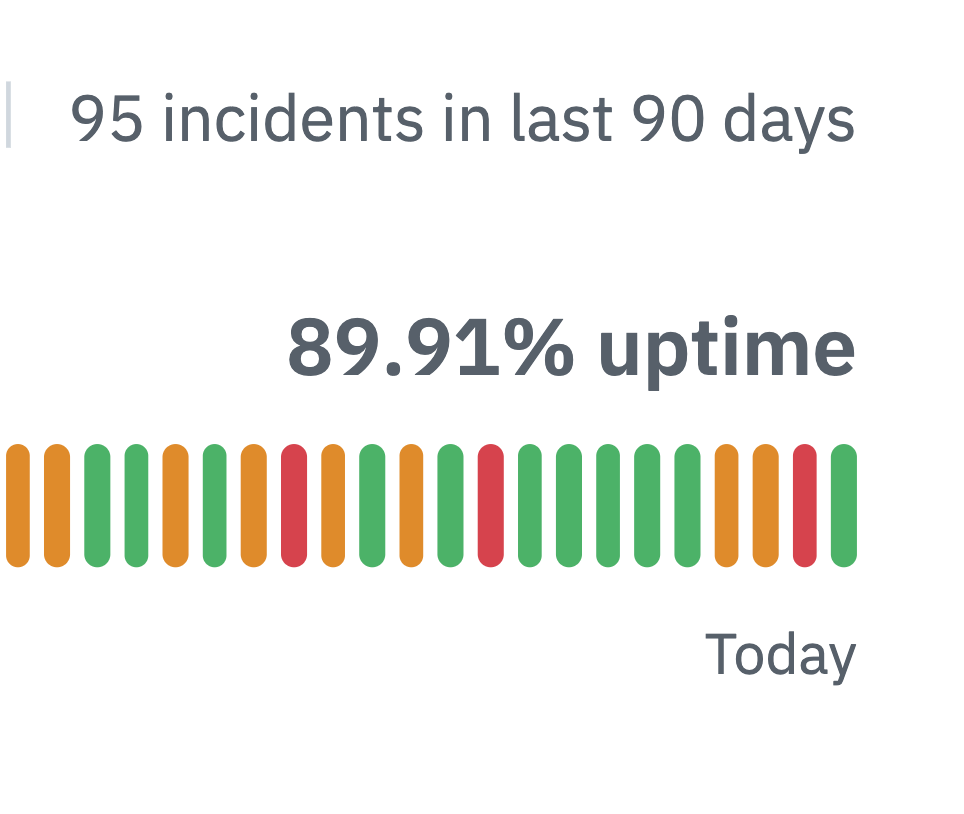

IT'S HAPPENING

GITHUB, THE FIRST ENTERPRISE CLOUD SOLUTION TO REACH ZERO NINES RELIABILITY

Boosted by zkat@toot.cat ("Katerina Marchán"):

downey@floss.social ("Michael Downey 🧢") wrote:

🚨 LinkedIn runs a silent browser scan on every Chrome user who visits the site. 6,222 extensions. ~405 million users affected. No consent, no disclosure, no mention in their privacy policy.

The scan identifies your sales tools, VPN, ad blocker, job search extensions, and extensions tied to religion, politics, and disability.

The full technical breakdown, legal analysis, and searchable database of every scanned extension:

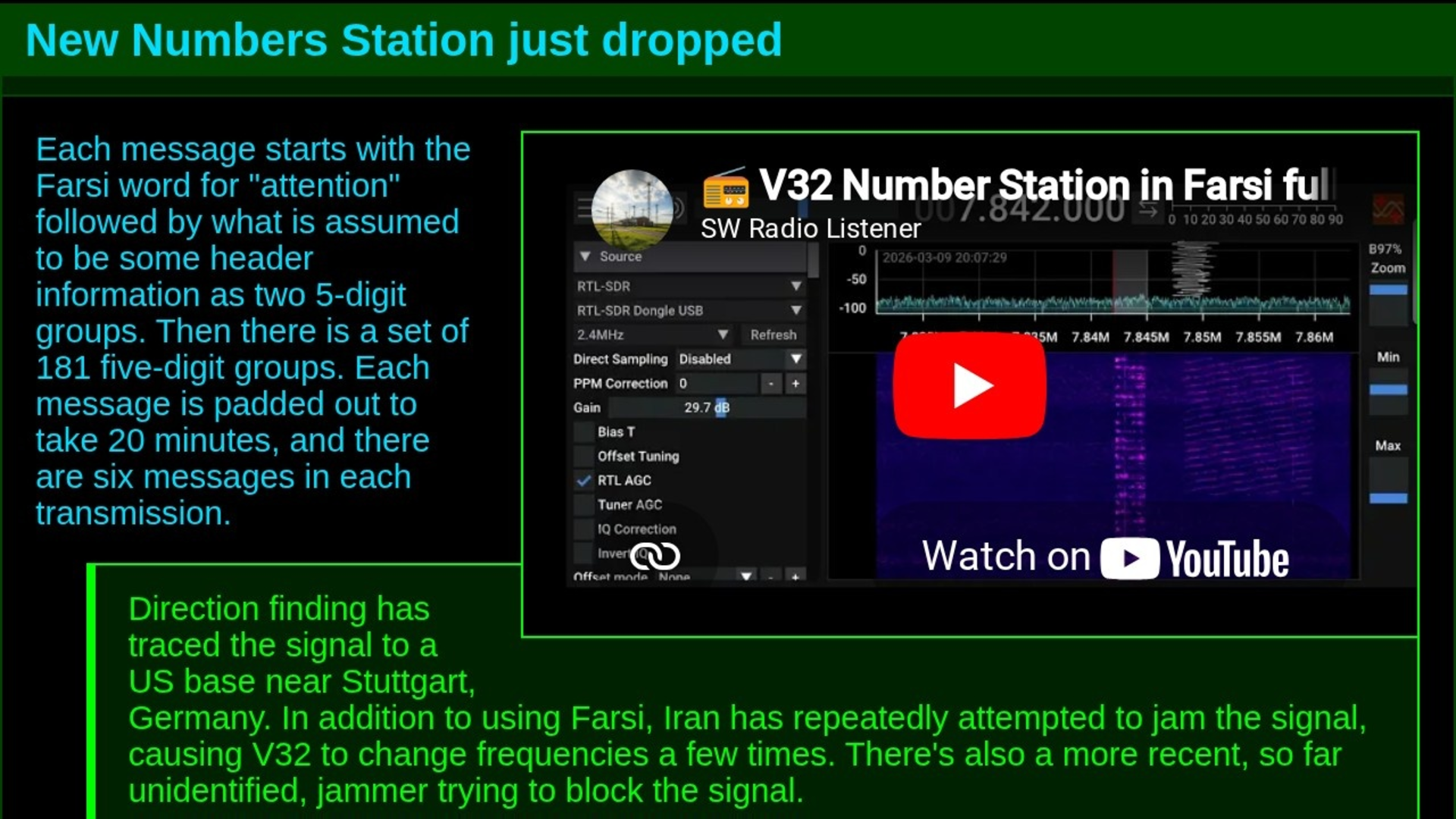

New Numbers Station just dropped.

Each message starts with the Farsi word for "attention" followed by what is assumed to be some header information as two 5-digit groups. Then there is a set of 181 five-digit groups. Each message is padded out to take...

https://jwz.org/b/yk5r

dysfun@treehouse.systems ("gaytabase") wrote:

the hidden dangers of german so-called 'friends'. don't be a victim

Boosted by slightlyoff@toot.cafe ("Alex Russell"):

timbray@cosocial.ca ("Tim Bray") wrote:

1. GenAI is probably going to impact us but how? Nobody knows.

2. The worst thing about GenAI isn't the technology, it's the shitty people: https://karlbode.com/the-problem-with-ai-is-shitty-human-beings [<must-read]

3. We can’t have a grown-up conversation on the subject because the trillion-dollar bet’s fear+greed pressure crowds out truth.

4. When the bubble pops, the shitty people will melt away. Then we can maybe figure it out.

5. We so *SO* need that bubble to pop. Next week would be ideal.

Boosted by glyph ("Glyph"):

swachter@toot.boston wrote:

I went to pottery class today and opened by asking several of my classmates if they knew how to use the slab roller upstairs. Nobody did but everyone wanted to, so when the teacher got in I’m like “hey teach, do you know how to use the slab roller upstairs” and she’s like “no” (she’s a visiting artist who only got here a couple months ago so this is not very surprising) “but I’ve used a lot of different ones and I bet we can figure it out.” Me: “do you want to go on a Journey of Discovery?” Everyone: yes. YES. *cue sickos faces* so we all thunder up the stairs periodically shouting JOURNEY OF DISCOVERY and our teacher snags another person who uses the slab roller A Lot and this person gives us a very thorough lesson and demonstration and every so often someone else wanders through and asks what’s happening and we all go JOURNEY OF DISCOVERY at them and now everyone knows how to use the slab roller and honestly I cannot recommend this approach enough as a way to make learning new skills and life generally more exciting.

dysfun@treehouse.systems ("gaytabase") wrote:

omg, i just got german music'd at

Boosted by glyph ("Glyph"):

cwebber@social.coop ("Christine Lemmer-Webber") wrote:

Welcome to this place of honor.

You can do anything at this place of honor, anything at all.

The only limit is yourself.

Welcome.

And welcome to you, who have come, to this place of honor!

Yes... welcome...

baldur@toot.cafe ("Baldur Bjarnason") wrote:

So, AFAICT the “reasonable” argument for LLM coding is that if we persist through economic chaos and environmental crises while sacrificing the creative industries and education, then models built using stolen data and license violations might safely revolutionise software dev productivity?

Public sentiment against “AI” is sour enough already. If you want to make software developers as a class as broadly disliked as tax auditors, this’d be the way to do it.

aredridel@kolektiva.social ("Mx. Aria Stewart") wrote:

RE: https://hachyderm.io/@inthehands/116337385332834177

And even this: It's not a _purpose of genAI_, it's a _purpose of using genAI this way_. There are people doing this, and even this framing struggles to keep them in focus because of the effect. It's a super effective way to wash accountability.

There's a reason I bang the 'these are tools' drum. Tools have users and operators. There are people with names and addresses who are doing this.

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

inthehands@hachyderm.io ("Paul Cantrell") wrote:

The article correctly notes:

❝The constitutional question of who authorised this war and the legal question of whether this strike constitutes a war crime were displaced by a technical question that is easier to ask and impossible to answer in the terms it set. The Claude debate absorbed the energy.❞

…but fails to recognize that this was part of the •point• of having Claude in the chain. This isn’t just the press missing the ball (though it is that). This is an evil system working as designed.

/end

Boosted by aredridel@kolektiva.social ("Mx. Aria Stewart"):

inthehands@hachyderm.io ("Paul Cantrell") wrote:

The sour note: The article bends over backwards to absolve Claude — failing to recognize that one of the primary purposes of gen AI in large, dubious orgs is to obfuscate blame, to act as an accountability sink.

If there’s an LLM in the chain here, that’s •not• an afterthought. It’s central to the failure — even if the bad data didn’t originate with the LLM.

3/

Boosted by baldur@toot.cafe ("Baldur Bjarnason"):

uglyreykjavik.bsky.social@bsky.brid.gy ("Ugly Reykjavik") wrote:

Ice cream, anyone? #Iceland #photography #streetphotography #nature #landscape #abandoned #decay #grass #rust